Agentic coding does something strange.

It makes indecision cheap.

You’re no longer choosing between Product Path A or Product Path B.

You’re shipping A and B before your coffee cools.

So the question changes from:

“What’s the right decision?”

to:

“What’s the fastest way to test both?”

That shift sticks.

It’s hard to go back to “classical coding” after that. Feels like downgrading from live GPS to printed MapQuest directions.

—

We’ve seen this movie before.

Around 2004, companies still ran feasibility tests.

Safe. Contained. Respectable.

Then the internet scaled. Distribution got weirdly efficient. And someone asked:

“If it costs the same to run a feasibility test as it does to launch the real thing, why not just launch the real thing?”

So we did.

Feasibility tests faded. “Proof of concept” (PoC) took their place. Real products shipped earlier.

—

This is that moment again.

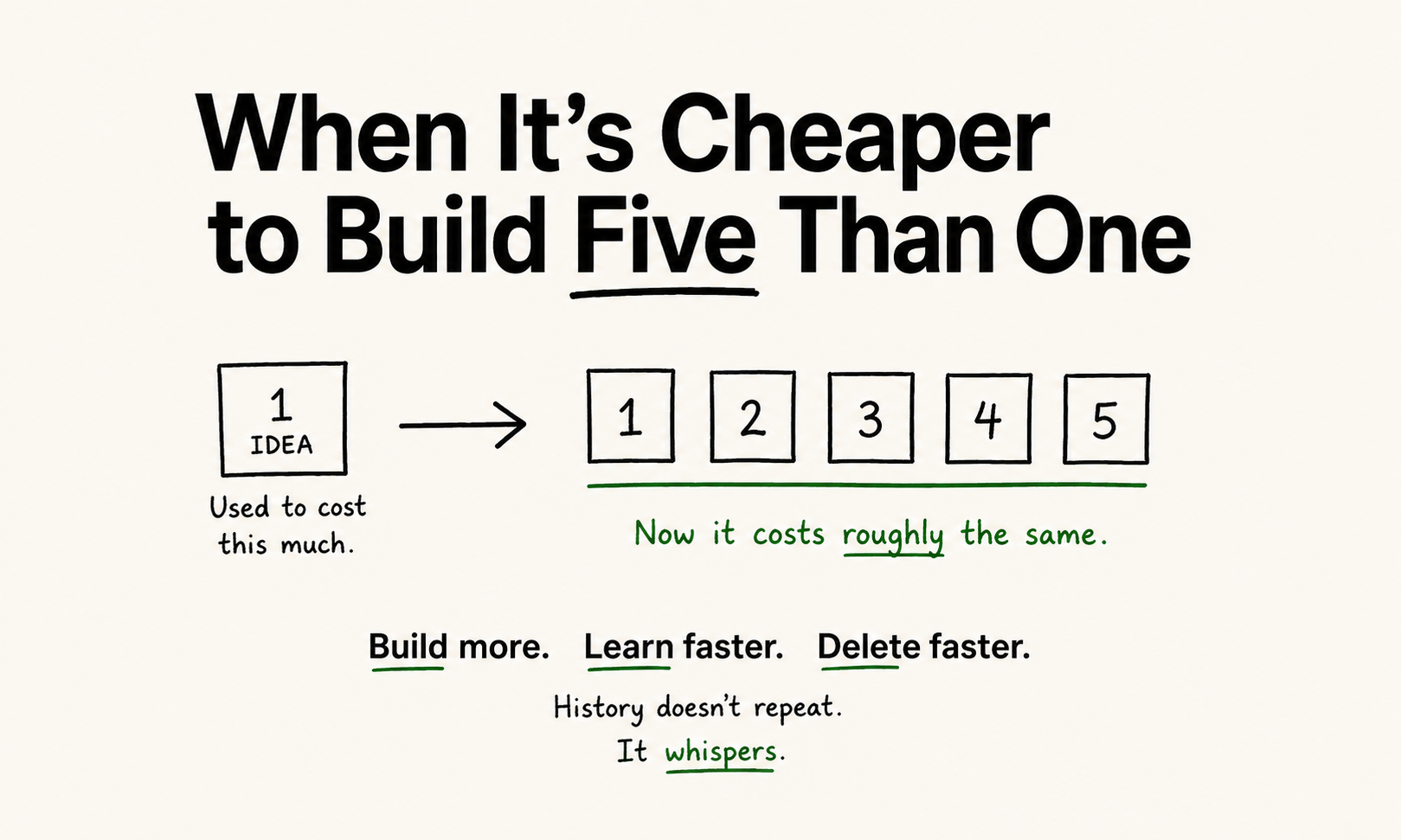

If it used to cost $5000 to launch one feature, and it now costs roughly the same to build 5 features, then:

- build 5

- ship 5

- instrument/track everything

- watch what users actually touch

Not what they say.

What they touch.
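
A rough sketch of what "watch what they touch" could look like in practice. Everything here is made up for illustration: the event log shape, the feature names, and the `touches_per_feature` helper are assumptions, not any real analytics API. The idea is only to count distinct users per feature, so one heavy user doesn't look like demand.

```python
from collections import Counter

# Hypothetical event log: one (user_id, feature_name) pair per interaction.
events = [
    ("u1", "quick_export"),
    ("u2", "quick_export"),
    ("u1", "quick_export"),  # repeat touch by the same user
    ("u1", "smart_tags"),
    ("u3", "quick_export"),
    ("u2", "dark_mode"),
]

def touches_per_feature(events):
    """Count distinct users who touched each feature."""
    # Deduplicate so repeat touches by one user count once.
    unique_pairs = {(user, feature) for user, feature in events}
    return Counter(feature for _, feature in unique_pairs)

usage = touches_per_feature(events)
for feature, users in usage.most_common():
    print(f"{feature}: {users} user(s)")
```

In a real product the events would come from whatever tracking you already have. The counting stays this simple.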

—

This creates a new instinct:

- shipping is thinking

- observability is judgment

- deprecation is hygiene

Because if you ship 5, you need to be ready to kill 4.

No ceremony.

Otherwise you wake up maintaining a digital antique shop. Think eBay inertia.

—

So the pattern:

build more than you need. learn faster than you build. delete faster than you regret.

History doesn’t repeat.

But it does whisper:

“If the cost drops, your behavior should change too.”

Let’s discuss the counterargument (and where this breaks)

There’s a real risk hiding here:

more features ≠ more insight

more speed ≠ more clarity

If you build 5 things at once:

- signals get noisy

- causality gets blurry

- execution gets shallow

- teams feel fast but learn slow

Speed, without structure, turns into expensive confusion.

—

There’s also the user side.

Users don’t love churn.

If things appear, change, and disappear too quickly:

- trust drops

- mental models break

- your product feels unstable

Consistency still matters.

—

And one more quiet friction:

your brain didn’t get faster just because your tools did.

Context-switching is still real.

Taste is still scarce.

Deletion is still political.

—

How to not step into that trap

Keep the speed.

Add discipline:

- each feature = one clear hypothesis

- each feature = one success metric

- each feature = killable in days

So it’s not:

“build 5 ideas”

It’s:

“run 5 clean experiments”
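
One way to make "clean experiment" concrete is to write the three rules down as data. This is a minimal sketch under stated assumptions: the `Experiment` class, its field names, the example feature, and the 7-day window are all hypothetical, chosen only to show one hypothesis, one metric, and a built-in kill date.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class Experiment:
    feature: str      # what shipped
    hypothesis: str   # the one thing we expect to be true
    metric: str       # the one number that decides it
    target: float     # value the metric must reach
    started: date
    max_days: int = 14  # assumed default window; pick your own

    def deadline(self) -> date:
        return self.started + timedelta(days=self.max_days)

    def verdict(self, observed: float, today: date) -> str:
        """Return 'keep', 'kill', or 'wait' for the observed metric value."""
        if observed >= self.target:
            return "keep"
        if today >= self.deadline():
            return "kill"  # time is up and the target was missed
        return "wait"

exp = Experiment(
    feature="quick_export",
    hypothesis="Users export more when it takes one click",
    metric="exports_per_active_user",
    target=0.3,
    started=date(2025, 1, 6),
    max_days=7,
)

print(exp.verdict(0.10, date(2025, 1, 10)))  # still inside the window
print(exp.verdict(0.10, date(2025, 1, 13)))  # deadline reached, target missed
print(exp.verdict(0.35, date(2025, 1, 8)))   # target hit early
```

The point of the kill date is that deletion becomes the default. Nobody has to argue for it later.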

—

Stabilize the core.

Experiment at the edges.

Signal what’s new. Kill what doesn’t work.

—

Or more simply:

build more, but learn clean

ship faster, but keep your center stable

start aggressively, but delete even more aggressively

That’s the version that scales.

The rest turns into Craigslist with better branding.