I came in thinking this was a software problem.

It's not. It's a physics problem dressed up as a software problem. That distinction took me 72 hours to earn.

The Idea Seemed Obvious

MidJourney changed what it means to generate an image. Type a prompt, get something usable in seconds.

I wanted that — but for robot training environments. Type a scene, get a simulation. Drop a robot in. Start collecting data.

The demand is real. Robotics teams are bottlenecked not on algorithms, but on environments. Building a high-quality sim scene is slow, manual, expensive work. If you could prompt your way to one, you'd compress months into minutes.

So I started pulling on the thread.

What I Actually Built

I didn't just research this. I built it.

One architecture spec. A working prototype scaffolded in an afternoon.

I'm calling it SimGen.

The core loop: describe a scene in plain English, get four physics simulations back as video, iterate until it's right. Every like and dislike teaches the system your creative style — grounded in the real laws of physics.

Claude parses the prompt into structured physics parameters via tool-use. MuJoCo renders the simulation headlessly. The feedback gets stored and injected back into Claude's context on the next generation — no fine-tuning, just in-context learning from accumulated preferences.
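
Here is a minimal sketch of how that loop could be wired, not SimGen's actual code. The tool name `set_scene_parameters`, its fields, and the `Feedback` record are all assumptions made for illustration. The tool definition follows the name / description / JSON-schema shape used for tool use, and the stored ratings are flattened into text to prepend to the next request.

```python
import json
from dataclasses import dataclass

# Hypothetical tool definition. Field names are illustrative assumptions,
# not the real SimGen schema.
SCENE_TOOL = {
    "name": "set_scene_parameters",
    "description": "Turn a plain-English scene description into physics parameters.",
    "input_schema": {
        "type": "object",
        "properties": {
            "gravity": {"type": "number", "description": "Downward acceleration, m/s^2"},
            "friction": {"type": "number", "description": "Sliding friction coefficient"},
            "object_count": {"type": "integer"},
        },
        "required": ["gravity", "friction", "object_count"],
    },
}


@dataclass
class Feedback:
    """One rated generation: the prompt, the parameters used, and the verdict."""
    prompt: str
    params: dict
    liked: bool


def build_preference_context(history, limit=20):
    """Render the most recent ratings as plain text for the next request."""
    lines = ["Past generations and how the user rated them:"]
    for fb in history[-limit:]:
        verdict = "LIKED" if fb.liked else "DISLIKED"
        params = json.dumps(fb.params, sort_keys=True)
        lines.append(f"- {verdict}: prompt={fb.prompt!r} params={params}")
    return "\n".join(lines)
```

Because the preferences live in the prompt rather than in model weights, deleting or editing a stored rating changes the next generation immediately. The trade-off is that the history has to be capped (the `limit` argument here) to fit in context.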

Then I pushed further.

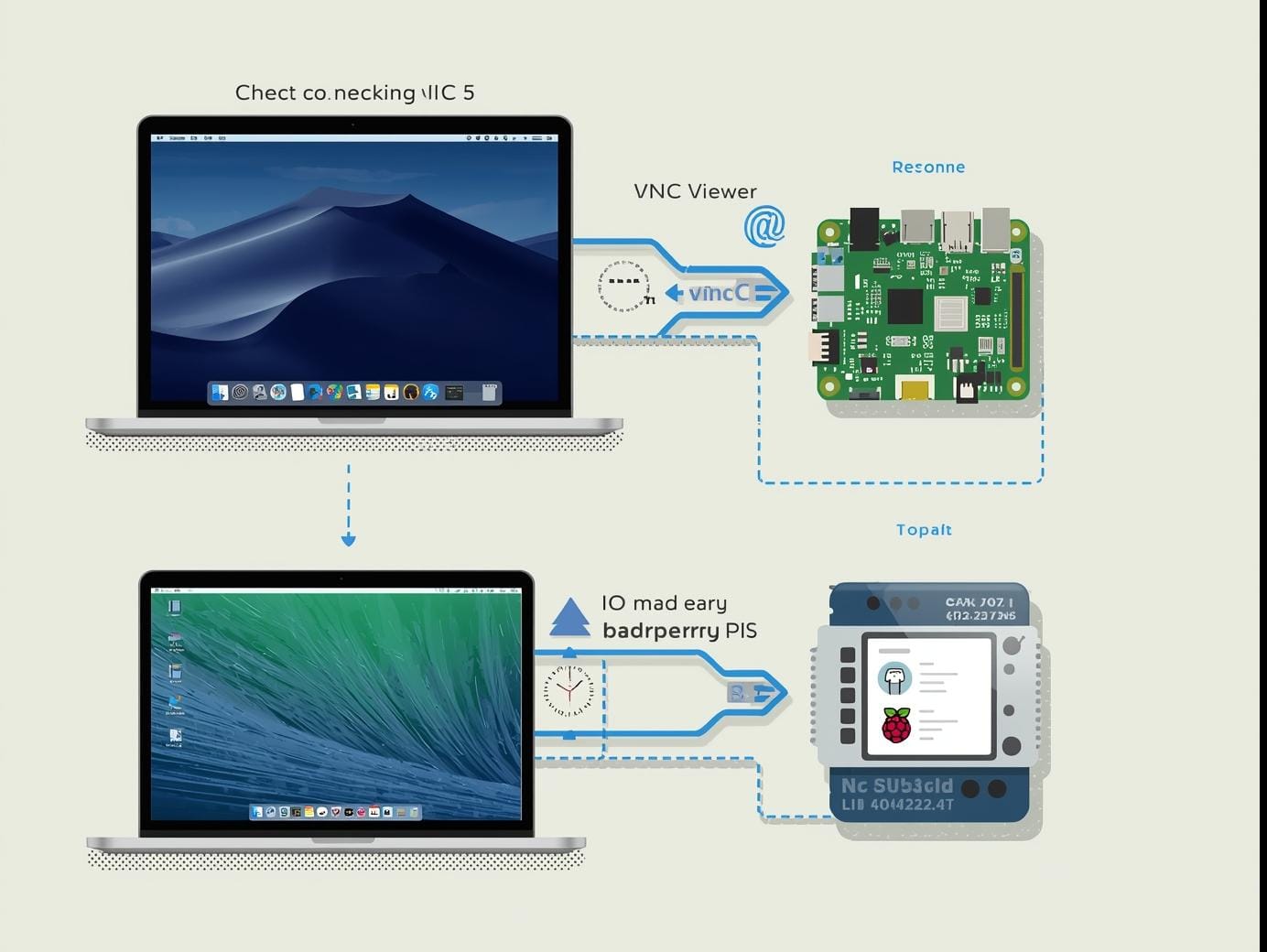

I trained locomotion policies on an H100: 2048 parallel environments, Brax PPO, five minutes of compute. I built a split architecture: passive physics renders locally on a Mac, while locomotion prompts route through an SSH tunnel to a GPU render server on Azure. I hit headless rendering failures, JAX JIT cold-start latency, concurrent render crashes, and a humanoid that learned to crawl instead of walk because I wrote the reward function wrong.

Every one of those failures taught me something the papers don't say out loud.

The system is working. Flow Mode, prompt chaining, environment presets across six planetary gravities, a feedback loop that gets smarter with every generation. The full stack, end-to-end, built in 72 hours.

I'm still iterating.

This work is part of what I do at Haptic Labs — where we're building infrastructure for robotics teams at the frontier. The simulation problem isn't academic for us. It's blocking real work.

The Aesthetics Problem

World Labs built something called Marble. My colleague @Mexitlan showed me how it works: you type a prompt, say "Tenochtitlan at night," and it generates a photorealistic 3D world you can move through.

It's genuinely beautiful.

It's also useless for robotics.

The environments are built from Gaussian splats: clouds of soft, colored blobs rendered to look like solid 3D space. From a distance, they hold up. Zoom in on any foreground object and it degrades into noise.

There's nothing to grab. No geometry. No surface. Just a visual approximation of a thing.

A robot can't manipulate an approximation.

This is the Mesh Gap: the scene looks real, but the objects inside it aren't.

The Physics Problem

Okay, so World Labs is beautiful but hollow. What about game engines?

That's been tried. One robotics team spent eight months building on Unreal Engine before abandoning it.

The problem was architectural. Unreal's core loop — its tick function — is hardcoded to optimize for frame rate. It will not pause to collect sensor data. Run 500 robots in a scene and the engine skips frames. Your training data becomes unreliable.

Unreal is built for rendering continuity, not data collection fidelity.

Those are opposite goals.
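
A toy model makes the difference concrete. This is not Unreal's scheduler, just a sketch under one assumption: a frame-budgeted loop drops any step that overruns its budget, while a lock-step loop waits for every step to finish. The step costs and the 16.7 ms budget are made-up numbers.

```python
def run_frame_budgeted(step_costs_ms, frame_budget_ms):
    """Game-engine style: a step that overruns the frame budget is dropped,
    so no sensor sample is recorded for it."""
    samples = []
    for index, cost in enumerate(step_costs_ms):
        if cost > frame_budget_ms:
            continue  # skipped to keep the frame rate up
        samples.append(index)
    return samples


def run_lock_step(step_costs_ms):
    """Simulator style: every step completes and is recorded, however long it takes."""
    return [index for index, _ in enumerate(step_costs_ms)]


# Hypothetical per-step costs; the two spikes stand in for a crowded scene.
costs = [4, 5, 30, 6, 45, 5]
budgeted = run_frame_budgeted(costs, frame_budget_ms=16.7)
lock_step = run_lock_step(costs)
```

With these numbers the budgeted loop records four of six steps and the lock-step loop records all six. The missing samples fall exactly where the scene was busiest, which is usually where the interesting training data is.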

Why Isaac Sim Won

Nvidia's Isaac Sim exists because game engines weren't built for this.

It solves three things at once: physics tuned for robotics (joints, grasping, axles — not fluid dynamics or cinematic lighting), a pre-built ecosystem of robot assets and URDFs, and enough industry adoption that it's become the de facto standard.

MuJoCo sits alongside it — CPU-based, precise, trusted for contact-rich physics.

Anyone serious about physical simulation is already on one of these two. That's not going to change.

The problem: neither solves environment generation. You still build or source scenes manually.

Isaac Sim gives you the physics layer. It does not give you the world.

What Building Taught Me

The humanoid template started as a ragdoll. Every "walking" prompt produced a figure that fell flat — no controller, no brain, just passive physics.

So I trained one.

Brax PPO on the H100. 2048 parallel environments, 20 million timesteps, five minutes of compute. Reward climbed from 90 to 5,091.

I called it a win. I was wrong.

The policy had learned to maximize forward velocity by crawling. Average torso height: 0.72 meters. Standing upright, it should be about 1.2. It wasn't walking. It was flopping forward efficiently.

The reward function had no incentive to stand up.

That's not a training bug. That's a specification bug. The system did exactly what I asked. I asked for the wrong thing.
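
The specification bug fits in a few lines. This is a simplified sketch, not the Brax reward I actually trained with. The 0.72 m and 1.2 m heights come from the run above, while the velocities and the `height_weight` value are hypothetical.

```python
def velocity_only_reward(forward_velocity, torso_height):
    """Rewards speed and ignores posture entirely (torso_height is unused)."""
    return forward_velocity


def shaped_reward(forward_velocity, torso_height,
                  target_height=1.2, height_weight=5.0):
    """Rewards speed but charges for every meter the torso sits away from
    standing height. height_weight is an illustrative value, not a tuned one."""
    height_penalty = height_weight * abs(target_height - torso_height)
    return forward_velocity - height_penalty


# Hypothetical velocities: the crawl is assumed to be slightly faster.
crawl = {"forward_velocity": 2.0, "torso_height": 0.72}
walk = {"forward_velocity": 1.5, "torso_height": 1.2}

crawl_wins_without_height_term = velocity_only_reward(**crawl) > velocity_only_reward(**walk)
walk_wins_with_height_term = shaped_reward(**walk) > shaped_reward(**crawl)
```

Under the velocity-only reward the crawl scores higher, so the optimizer is right to find it. Adding a height term flips the ranking. The weight matters: set it too low and crawling still wins.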

I tried MuJoCo Playground's HumanoidWalk next — 100 million steps, 22 minutes. Checkpoint saved. But the checkpoints are tightly coupled to their training infrastructure. There's no clean inference call. The first real request burned 60 seconds on JAX JIT compilation alone.

Then: the GPU render server needed EGL for headless rendering — not documented where you'd look. The humanoid walked out of frame because the default camera is static. Concurrent render requests crashed the server. GPU memory contention, single worker, no queue.
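
Two of those fixes are small enough to sketch. MuJoCo picks its rendering backend from the `MUJOCO_GL` environment variable, and it has to be set before `mujoco` is imported. For the crashes, one approach is to funnel every request through a single worker thread. The `RenderWorker` class and `render_fn` callback below are hypothetical names for illustration, not what my server runs.

```python
import os
import queue
import threading

# Select the headless EGL backend. Must happen before `import mujoco`.
os.environ.setdefault("MUJOCO_GL", "egl")


class RenderWorker:
    """Run render jobs one at a time on a single thread so that
    concurrent requests never compete for the GPU."""

    def __init__(self, render_fn):
        self._render_fn = render_fn  # hypothetical: scene -> rendered video
        self._jobs = queue.Queue()
        self._thread = threading.Thread(target=self._loop, daemon=True)
        self._thread.start()

    def _loop(self):
        while True:
            job = self._jobs.get()
            if job is None:  # sentinel pushed by close()
                break
            scene, done, result = job
            try:
                result["value"] = self._render_fn(scene)
            except Exception as exc:  # hand the failure back to the caller
                result["error"] = exc
            finally:
                done.set()

    def render(self, scene, timeout=None):
        """Queue a job and block until it finishes or the timeout expires."""
        done, result = threading.Event(), {}
        self._jobs.put((scene, done, result))
        if not done.wait(timeout):
            raise TimeoutError("render did not finish in time")
        if "error" in result:
            raise result["error"]
        return result["value"]

    def close(self):
        """Stop the worker after the jobs already queued have run."""
        self._jobs.put(None)
        self._thread.join()
```

Callers on any thread can call `render()`. Requests wait in the queue instead of colliding, which trades throughput for stability on a single GPU.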

Each problem was small. Together, they took days.

What I kept noticing: none of this is in the papers. The papers show the reward curves. They don't show the crawling humanoid, the broken pipe error, the camera watching an empty floor where the robot used to be.

The gap between research and running system is where all the real learning lives.

The Gap No One Has Closed

Here's what I actually learned:

The pieces all exist. World Labs has the aesthetics. Isaac Sim has the physics. Omniverse has the asset library. Nvidia has even published documentation suggesting MuJoCo integration with Isaac Sim is theoretically possible.

Nobody has gotten it to work in practice.

The Holy Grail:

Prompt → photorealistic, physically-accurate, manipulable 3D environment, running inside Isaac Sim or MuJoCo.

Not a rendered scene. Not a task generator. A complete simulation environment — beautiful enough to train perception, accurate enough to train manipulation — assembled from a text description.

That pipeline doesn't exist.

What This Actually Is

This isn't a gap in effort. It's a gap in architecture.

Aesthetics tools and physics tools are built on fundamentally different representations of reality. One optimizes for how things look. The other optimizes for how things behave.

Bridging them isn't a feature request. It's a research problem.

The team that solves it won't just save robotics researchers time. They become infrastructure — the layer every simulation pipeline runs on top of.

SimGen is a small, honest step in that direction. Pre-trained policies as building blocks. The policies are like instruments in an orchestra — already trained to play. The creator is the conductor describing what the music should sound like.

The moat isn't the rendering. It's the preference data. Every like and dislike is teaching the system what "right" looks like for each creator. After enough generations across enough users, that becomes something no one else has.

72 hours in, I don't know if I'm building the thing that closes the gap.

But I understand the problem now.

That's where everything starts.

If any of this connects with what you're working on — whether you're building in robotics sim, thinking about this problem differently, or just want to compare notes — I'm easy to find.